Learned Visual Navigation for Under-Canopy Agricultural Robots

Arun Narenthiran Sivakumar¹, Sahil Modi¹, Mateus Valverde Gasparino¹, Che Ellis², Andres Eduardo Baquero Velasquez¹, Girish Chowdhary¹*, Saurabh Gupta¹*

¹ University of Illinois at Urbana-Champaign

Correspondence to {av7, girishc}@illinois.edu

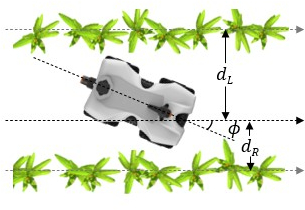

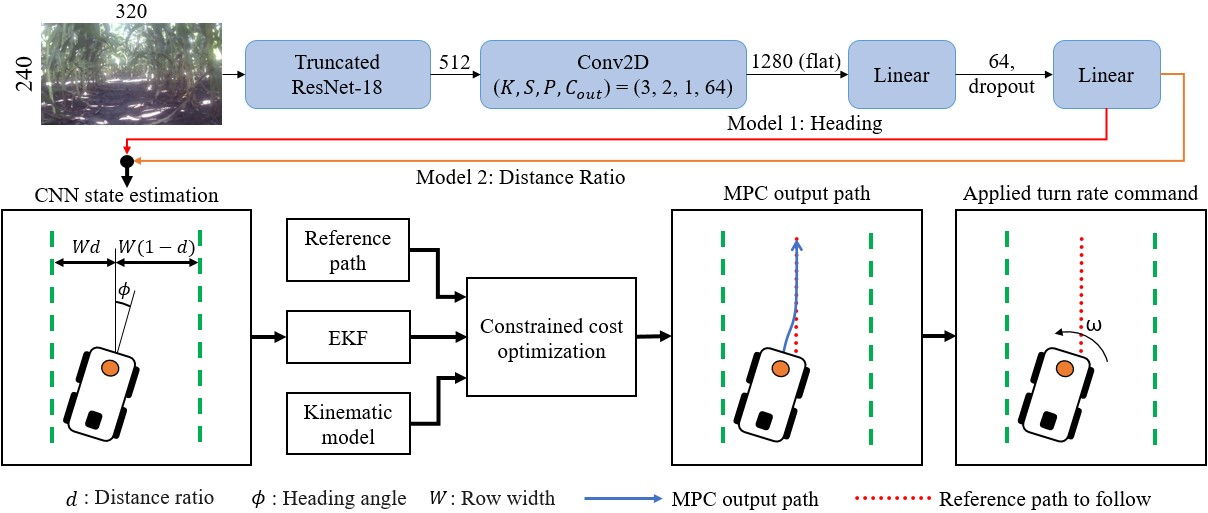

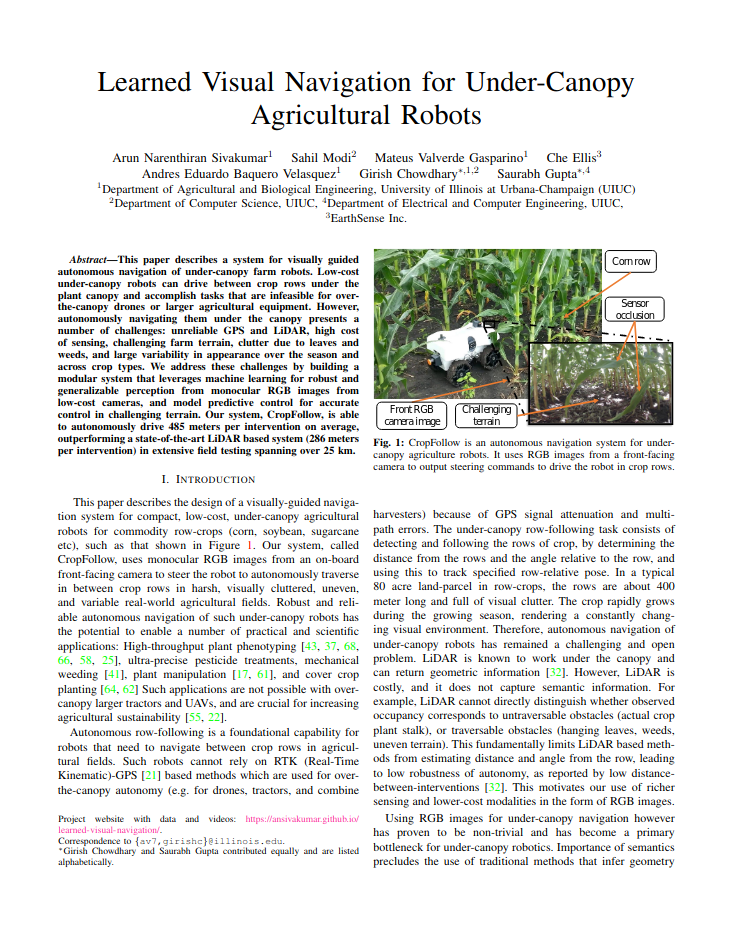

This paper describes a system for visually guided autonomous navigation of under-canopy farm robots. Low-cost under-canopy robots can drive between crop rows under the plant canopy and accomplish tasks that are infeasible for over-the-canopy drones or larger agricultural equipment. However, navigating them autonomously under the canopy presents a number of challenges: unreliable GPS and LiDAR, high cost of sensing, challenging farm terrain, clutter due to leaves and weeds, and large variability in appearance over the season and across crop types. We address these challenges by building a modular system that uses machine learning for robust and generalizable perception from monocular RGB images captured by low-cost cameras, and model predictive control for accurate control in challenging terrain. Our system, CropFollow, drives autonomously for 485 meters per intervention on average, outperforming a state-of-the-art LiDAR-based system (286 meters per intervention) in extensive field testing spanning over 25 km.

We use a convolutional network to predict the robot's heading and its placement within the row. These estimates are used to compute the row center, which serves as the reference trajectory. A model predictive controller then converts the reference trajectory into angular velocity commands.
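The pipeline described above (network outputs, then row-center reference, then MPC) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the CropFollow implementation. The following are hypothetical choices made for the example: the placement encoding (distance to the left crop row divided by row width, so 0.5 means centered), the sign conventions (robot frame with x forward and y left, counterclockwise heading positive), the 0.76 m row width in the usage line, the function names, and the controller itself. The controller here is a deliberately simplified, sampling-based MPC that holds the angular velocity constant over the horizon, predicts motion with a unicycle model at a fixed forward speed, and returns the cheapest candidate.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def placement_to_offset(placement: float, row_width: float) -> float:
    """Signed lateral offset of the robot from the row center (m, positive = left).

    ASSUMPTION: placement = distance to the left crop row / row_width,
    so 0.5 means the robot is centered between the two rows.
    """
    return (0.5 - placement) * row_width


def row_center_reference(heading: float, offset: float,
                         lookahead: float = 2.0, n_points: int = 5) -> List[Point]:
    """Sample the row center line in the robot frame (x forward, y left).

    heading: robot yaw relative to the row direction (rad, counterclockwise positive).
    offset:  signed lateral offset from placement_to_offset.
    """
    points = []
    for i in range(n_points):
        s = lookahead * i / (n_points - 1)  # distance along the row center line
        # Rotate/translate a row-frame point (s, 0) into the robot frame.
        x = s * math.cos(heading) - offset * math.sin(heading)
        y = -s * math.sin(heading) - offset * math.cos(heading)
        points.append((x, y))
    return points


def mpc_angular_velocity(reference: List[Point], v: float = 0.5, dt: float = 0.1,
                         horizon: int = 20, omega_max: float = 1.0,
                         n_candidates: int = 41, w_dist: float = 1.0,
                         w_heading: float = 0.3, w_omega: float = 0.05) -> float:
    """Return the angular velocity that best tracks the reference line.

    Simplified sampling-based MPC (illustrative only): each candidate keeps
    omega constant over the horizon, a unicycle model with fixed forward
    speed v predicts the pose, and the lowest-cost candidate is returned.
    """
    (x0, y0), (x1, y1) = reference[0], reference[1]
    length = math.hypot(x1 - x0, y1 - y0)
    dx, dy = (x1 - x0) / length, (y1 - y0) / length  # unit direction of the row
    nx, ny = -dy, dx                                  # unit normal pointing left of the row
    ref_yaw = math.atan2(dy, dx)

    best_omega, best_cost = 0.0, math.inf
    for i in range(n_candidates):
        omega = -omega_max + 2.0 * omega_max * i / (n_candidates - 1)
        x, y, yaw, cost = 0.0, 0.0, 0.0, 0.0  # robot starts at the frame origin
        for _ in range(horizon):
            # Unicycle prediction step.
            x += v * math.cos(yaw) * dt
            y += v * math.sin(yaw) * dt
            yaw += omega * dt
            dist = (x - x0) * nx + (y - y0) * ny  # signed cross-track error
            yaw_err = math.atan2(math.sin(yaw - ref_yaw), math.cos(yaw - ref_yaw))
            cost += w_dist * dist ** 2 + w_heading * yaw_err ** 2 + w_omega * omega ** 2
        if cost < best_cost:
            best_omega, best_cost = omega, cost
    return best_omega
```

A typical call chains the three steps, for example with a hypothetical network output of placement 0.4 and heading 0.05 rad: `mpc_angular_velocity(row_center_reference(0.05, placement_to_offset(0.4, 0.76)))`. Because the robot is both left of center and turned left, the returned command should be negative (a turn to the right). A real controller would optimize a full control sequence under actuator and terrain constraints; the constant-omega grid search is used here only to keep the example short and dependency-free.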

Paper


Arun Narenthiran Sivakumar, Sahil Modi, Mateus Valverde Gasparino, Che Ellis, Andres Eduardo Baquero Velasquez, Girish Chowdhary, Saurabh Gupta.

Learned Visual Navigation for Under-Canopy Agricultural Robots.

RSS 2021.

@INPROCEEDINGS{Sivakumar-RSS-21,
  AUTHOR = {Arun Narenthiran Sivakumar AND Sahil Modi AND Mateus Valverde Gasparino AND Che Ellis AND Andres Eduardo {Baquero Velasquez} AND Girish Chowdhary AND Saurabh Gupta},
  TITLE = {{Learned Visual Navigation for Under-Canopy Agricultural Robots}},
  BOOKTITLE = {Proceedings of Robotics: Science and Systems},
  YEAR = {2021},
  ADDRESS = {Virtual},
  MONTH = {July},
  DOI = {10.15607/RSS.2021.XVII.019}
}


Acknowledgements

This work was supported in part by NSF STTR #1820332, USDA/NSF CPS project #2018-67007-28379, USDA/NSF AIFARMS National AI Institute USDA #020-67021-32799/project accession no. 1024178, NSF IIS #2007035, and DARPA Machine Common Sense. We thank EarthSense Inc. for the robots used in this work, and the Department of Agricultural and Biological Engineering and the Center for Digital Agriculture (CDA) at UIUC for the Illinois Autonomous Farm (IAF) facility used for data collection and field validation of CropFollow. We thank Vitor Akihiro H. Higuti and Sri Theja Vuppala for their help integrating CropFollow on the robot and with field validation.

